A mobile app that helps people find bars and cafés with sunny outdoor seating in Copenhagen.

In a city where sun defines an afternoon out, the difference between a sunny terrace and a shaded one is the whole reason for choosing one place over another. No existing tool answered that question from the street.

The product is built around two ideas: that the right coordinate for a sun recommendation is the terrace, not the venue address, and that the right interface for a probabilistic model is four plain-language states, not a data layer.

What makes this project worth writing about is the process. Sunquà was designed through a workflow that does not fit the standard sequence of research, strategy, design, handoff. Product decisions, technical architecture, feature scope, and UX logic were developed conversationally with AI before a single Figma frame or line of code existed. That shift, using AI as a thinking partner throughout rather than only as a production tool at the end, is the thread that runs through this case study.

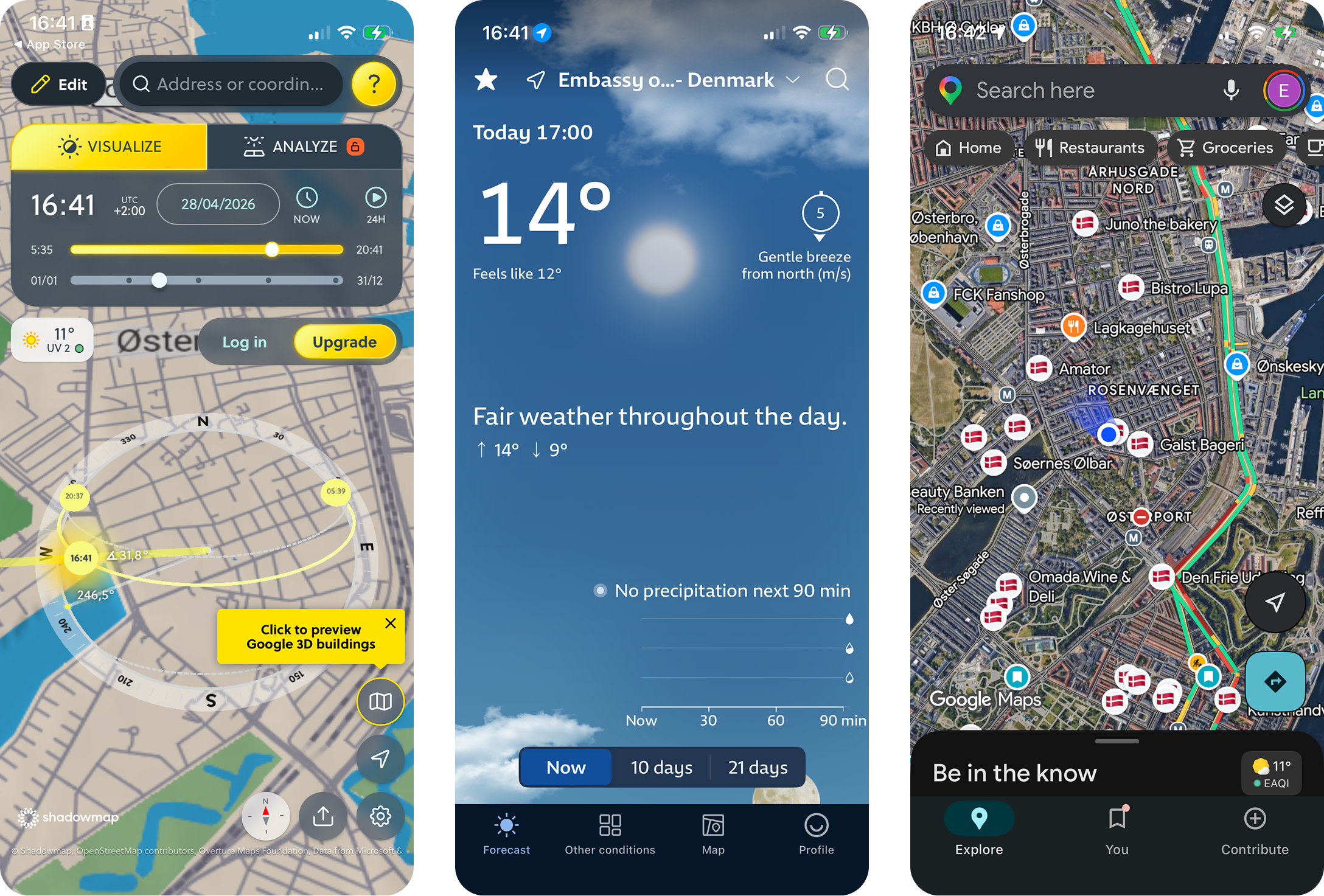

Take ShadowMap's data, which is built for architects and urban planners, and reframe it for a person standing on a corner deciding where to get a beer. Same underlying data, completely different product. That reframing was the whole project.

ShadowMap shows shadows but expects you to interpret them. Weather apps confirm the sun is out somewhere. Google Maps shows venues but knows nothing about light.

The gap was specific and small: turn solar geometry and building data into a recommendation a person can act on without learning anything.

Two things, layered.

First, accuracy. Sun position math is solved. The problem was that “sun position at the venue” is the wrong question. The right question is “sun position at the actual outdoor seats,” which can sit ten metres away from the front door and behind a different building. A model that's technically correct at the wrong coordinate is worse than no model at all, because it produces confident, wrong recommendations. The accuracy bet had to be made at the data layer, not the calculation layer.
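To make the "wrong coordinate" problem concrete, here is a minimal sketch, assuming a deliberately simplified occlusion test with a single building standing between the point and the sun. It is not Sunquà's actual model. The function name `is_shaded` and all the numbers are hypothetical. A point is treated as shaded when the building's angular height, as seen from that point, exceeds the sun's elevation.

```python
import math


def is_shaded(sun_elevation_deg: float, building_height_m: float, distance_m: float) -> bool:
    """Return True if a building blocks the sun at this point.

    Assumes one building of the given height standing `distance_m` away,
    exactly in the direction of the sun (a simplification for illustration).
    """
    if sun_elevation_deg <= 0:
        return True  # sun is at or below the horizon
    if distance_m <= 0:
        return True  # point is at the foot of the building
    # Angle from the point up to the building's roofline.
    blocking_angle_deg = math.degrees(math.atan2(building_height_m, distance_m))
    return sun_elevation_deg < blocking_angle_deg


# Same moment, same sun elevation (30 degrees), same 15 m building.
# Only the distance from the building differs between the two points.
entrance_shaded = is_shaded(30.0, 15.0, 40.0)  # entrance, 40 m from the building
terrace_shaded = is_shaded(30.0, 15.0, 12.0)   # terrace, 12 m from the building
```

With these made-up numbers the entrance is in sun and the terrace is in shade, even though the sun position math is identical for both. The calculation is right in each case; only the input coordinate changes the answer.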

Second, communicating uncertainty without losing trust. The underlying model deals in probabilities and partial states. Surfacing that complexity to a person standing on a street corner would defeat the product. Hiding it would lie. The interface had to feel confident without claiming a precision the model couldn't back up.

Product definition came first, in conversations with Claude over several long sessions. Two user types, one core promise, four interaction states, and a roadmap built around proving the hardest thing first rather than the most visible thing.

The hardest thing was not the map or the UI. It was whether terrace-level sun recommendations would be accurate enough that people would trust them and act on them. So the first work was a curated venue schema and a rough working model to test the math, not a polished screen.

From there, implementation moved to Codex, building outward from the recommendation layer to the map, the venue detail, and the trust features. Each step had a single product question attached. Nothing shipped until that question was answered.

Visual 2 — Sunny now

Custom Figma sun marker icon with its illustrated character. One line of plain language below.

Visual 2 — Sun coming soon

The 'arriving' state of the marker.

Visual 2 — Getting shady

The 'about to lose the sun' state.

Visual 2 — In shadow

The 'fully shaded' state of the marker.

Visual 3 — the roadmap

The phased delivery shown as a horizontal flow. Strip technical jargon.

Each phase had a single product question attached. Ship nothing until that question is answered.

The default move when building anything venue-based is to use the venue's address as the coordinate. Address is what Google Places, Foursquare, and OpenStreetMap return. It's what every mapping tutorial assumes.

It's also wrong for this product. A venue address is a building entrance. The terrace can be on the other side of the building, in a courtyard, around a corner, or across a small square. Sun at the entrance and sun at the seats are routinely different states. Using the address coordinate would mean the app is confidently wrong on a meaningful percentage of venues, which is worse than no app at all.

Every venue in the dataset has a hand-placed terrace coordinate, identified through site visits, satellite imagery, and manual review. That single decision is the reason the recommendations work. It's also the reason the dataset is small and Copenhagen-only. Coverage was deliberately traded for accuracy, because in this product, accuracy is the product.
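A sketch of what such a venue record could look like, assuming a schema with two separate coordinates. The field names, the `terrace_source` values, and the example venue are hypothetical and not taken from the real dataset. The helper `offset_m` uses the standard haversine formula to show how far apart the two points are.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Coordinate:
    lat: float  # degrees
    lon: float  # degrees


@dataclass(frozen=True)
class Venue:
    name: str
    address: Coordinate   # what a places API typically returns
    terrace: Coordinate   # hand-placed point at the outdoor seats
    terrace_source: str   # hypothetical: "site_visit", "satellite", "manual_review"


def offset_m(a: Coordinate, b: Coordinate) -> float:
    """Great-circle distance in metres between two coordinates (haversine)."""
    earth_radius_m = 6_371_000.0
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * earth_radius_m * math.asin(math.sqrt(h))


def recommendation_point(venue: Venue) -> Coordinate:
    """The sun recommendation is always computed at the terrace, never the address."""
    return venue.terrace


# Made-up venue: terrace placed 0.0001 degrees of latitude north of the entrance.
example = Venue(
    name="Example Café",
    address=Coordinate(55.6761, 12.5683),
    terrace=Coordinate(55.6762, 12.5683),
    terrace_source="site_visit",
)
gap = offset_m(example.address, example.terrace)  # roughly 11 metres
```

Keeping both coordinates in the record, rather than overwriting the address, keeps the address available for navigation while the model only ever sees the terrace.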

Visual 4 — terrace coordinate explainer

A diagram showing one venue with two pins: a grey pin at the building address and an orange pin at the terrace. Sun-line indicators showing different states at each point.

The address says the venue is sunny. The terrace says it isn't. The terrace is right.

The model under the hood deals in solar geometry, building occlusion, and time-of-day variance. None of that helps someone choose between two cafés in the next ten minutes.

The user-facing language collapses all of it into four states: Sunny now, Sun coming soon, Getting shady, In shadow. These drive every surface in the app: the map markers, the venue cards, the per-venue timeline, and the filters. The states are decisions, not measurements. They smooth over the messier reality of the model while still giving the user enough to act.
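The collapse into four states can be sketched as a small decision function. This is an illustration under assumptions, not the shipped logic: it assumes the model can answer two questions for a terrace (is it in sun now, and how many minutes until that changes), and the 30-minute "soon" window is a made-up threshold.

```python
from enum import Enum
from typing import Optional


class SunState(Enum):
    SUNNY_NOW = "Sunny now"
    SUN_COMING_SOON = "Sun coming soon"
    GETTING_SHADY = "Getting shady"
    IN_SHADOW = "In shadow"


# Assumption: how close a sun/shade transition must be to count as "soon".
SOON_WINDOW_MIN = 30.0


def classify(in_sun_now: bool, minutes_to_change: Optional[float]) -> SunState:
    """Map model output to one of the four user-facing states.

    `minutes_to_change` is None when no transition is expected
    within the period the model looked at.
    """
    changing_soon = minutes_to_change is not None and minutes_to_change <= SOON_WINDOW_MIN
    if in_sun_now:
        return SunState.GETTING_SHADY if changing_soon else SunState.SUNNY_NOW
    return SunState.SUN_COMING_SOON if changing_soon else SunState.IN_SHADOW
```

For example, `classify(True, 10)` yields `SunState.GETTING_SHADY` and `classify(False, 120)` yields `SunState.IN_SHADOW`. The function returns a decision, not a measurement: the number of minutes never reaches the interface.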

The Spots/Shades toggle sits on top of this as a verification layer. Most users live in Spots view, where the four-state language does the work. Users who don't trust a coloured dot can switch to Shades view, which renders the actual building shadows on the map. The verification view was framed deliberately as a trust feature, not a technical feature. Its purpose is to let users prove the recommendation to themselves rather than just believing it.

“I just want to know where the sun is.”

Visual 6 — Spots view

Phone screen with the four-state markers on the map. The everyday view.

Visual 6 — Shades view

Same area, with the live shadow overlay rendered on the map. The verification view.

Same area of the map, two different ways of seeing it.

Visual 7 — onboarding v1

The first version, built from a technical spec. Copy: 'Map-first venue states using sun data.'

Visual 7 — onboarding v2

The revised version. Copy: 'The sun is out somewhere. Go find it.'

The first version explained the architecture. The second version named the feeling. The product worked the same. The onboarding worked completely differently.

Shipped to the App Store, Copenhagen-only, with a hand-curated dataset of bars and cafés. The accuracy bet appears to hold against informal use. The four-state language reads correctly to people without explanation, including people who have never used a sun-tracking tool. The Spots/Shades toggle is used less often than I expected, which is the right outcome: the four-state recommendation is doing its job, and the verification layer is there for the moments when it isn't.

The most interesting product moment in the whole project wasn't a feature. It was the onboarding rewrite. The first version, built from the technical spec, explained the app in architecture terms. The rewrite started from the emotional moment (“the sun is out somewhere”) and moved every feature explanation to screen two. Same product, completely different first impression. That rewrite reframed how I think about the gap between what a product does and what a product is for.

Visual 8 — validation findings

Four insight cards in a grid. Plain text cards, not screenshots. Headlines: 'Four-state language reads without explanation', 'Verification view used sparingly, by design', 'Terrace coordinate accuracy holds in use', 'Onboarding rewrite changed first impressions, not the product'.

The roadmap is shaped around three product questions, each one a step the v1 deliberately didn't take.

The current recommendation is geometry-only. Adding live cloud cover from Open-Meteo would make the model honest about the most common reason a sun-line doesn't deliver. This is the next feature, and the most important one for accuracy.
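One possible shape for that step, sketched under assumptions: the geometry result is kept as-is and a cloud-cover percentage (0 to 100, as a forecast service would supply) acts as a gate on top of it. The function name and the 80 percent threshold are hypothetical, and no network call is shown.

```python
# Assumption: above this cloud cover, a geometric sun-line is unlikely to deliver.
CLOUDY_THRESHOLD_PCT = 80.0


def sun_likely(geometry_in_sun: bool, cloud_cover_pct: float) -> bool:
    """Combine the geometry-only result with cloud cover.

    Clouds can only take sun away; they never add sun to a shaded terrace.
    """
    if not 0.0 <= cloud_cover_pct <= 100.0:
        raise ValueError("cloud_cover_pct must be between 0 and 100")
    return geometry_in_sun and cloud_cover_pct < CLOUDY_THRESHOLD_PCT
```

Under this sketch, a terrace that is geometrically in sun under 95 percent cloud cover would no longer be recommended as sunny, while a shaded terrace stays shaded regardless of the sky.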

Sun alerts for saved venues turn Sunquà from a thing you open into a thing that tells you. Premium feature, opt-in per venue.

The behaviour around finding sun is rarely solo. Friends, shared planning, and group time slots are on the roadmap as a separate product question rather than an added feature, because they reframe the core loop.

Beyond that, a venue partner dashboard and expansion to other cities sit further out. Both depend on the curated dataset model holding up, which is itself the question the v1 was built to answer.